Chapter 7: The Bot Wars

If somebody had told me, during the early Shell promotions, that one day I would spend part of my old age feeding forty years of corporate history into artificial intelligences, I would have assumed they needed a lie-down in a darkened room.

Yet that is where the story arrived.

I should admit the oddity of it at once. This campaign began in the era of meetings, paper files, telephones, telexes, witness statements and petrol-station promotions. It has ended up in an age where one can put the same question to several machine systems at once and watch them produce overlapping, contradictory, evasive or unexpectedly candid accounts of the same underlying dispute. It is absurd. It is funny. It is faintly dystopian. It is also, from my point of view, useful.

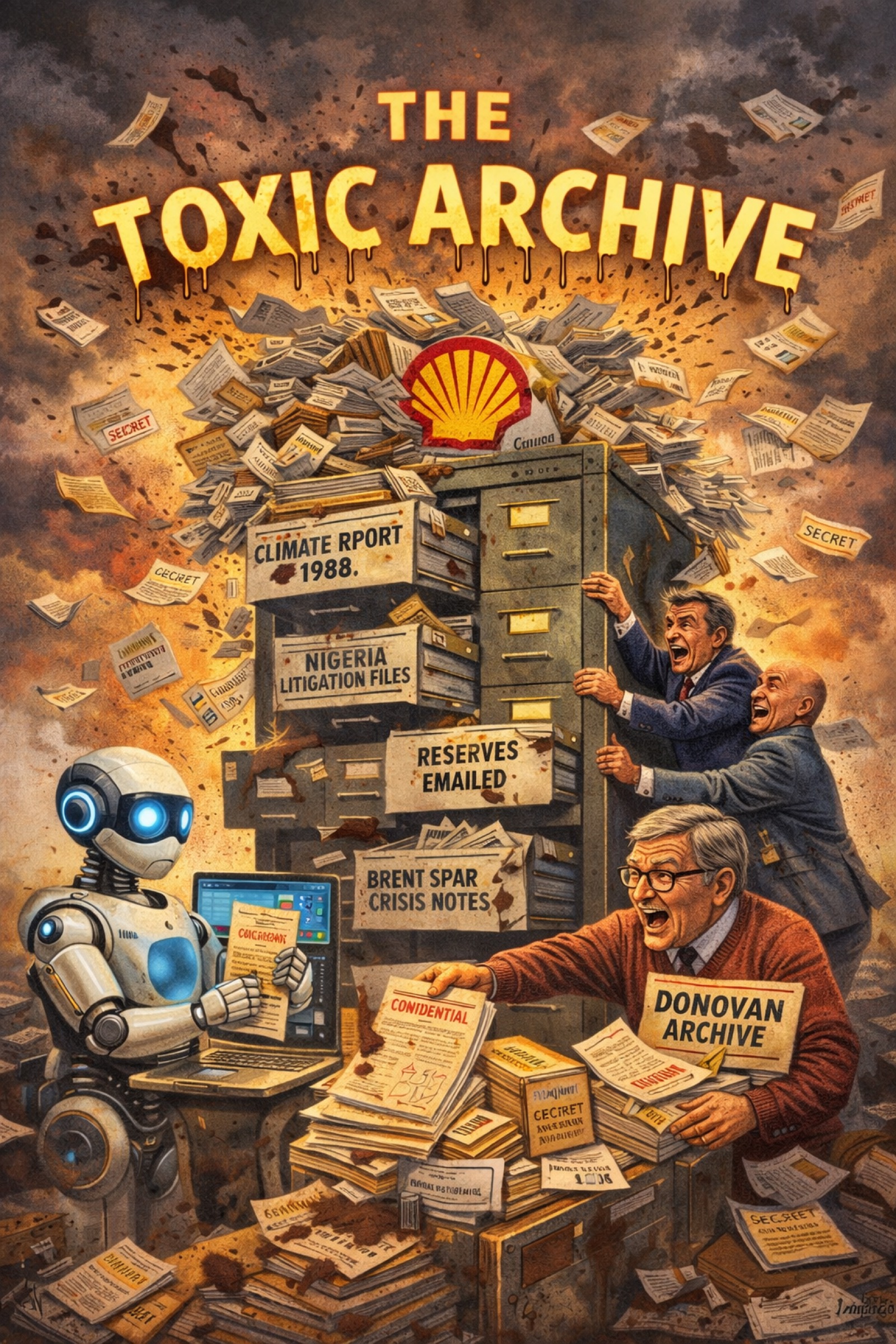

The idea came to me in the way many of my ideas have come over the decades: suddenly and without ceremony. Why not ask the machines? Why not give ChatGPT, Copilot, Grok, Perplexity and whichever other silicon oracle happens to be fashionable the same material, or near enough the same material, and see what they do with it? Why not compare the answers? Why not publish the comparisons? Why not make visible, in public, the strange new fact that even artificial intelligence cannot quite smooth away the afterlife of this dispute?

That was the beginning of what I came to think of as the bot wars.

The phrase should not be taken too grandly. No one was launching missiles from a shed in Essex. The bots were not combatants in any literal sense. They were tools, mirrors, amplifiers and, sometimes, accidental comedians. But the tactic was real enough. I would pose similar questions to multiple systems about Shell, about my history with the company, about the archive, about legal episodes, about allegations, about public scandals, about the relationship between my websites and Shell's reputation, and then compare how each system handled the terrain. Sometimes they agreed. Sometimes they hedged. Sometimes one would say plainly what another wrapped in managerial cotton wool. Sometimes a model would rediscover old journalism that deserved to be remembered. Sometimes it would hallucinate nonsense. Even the mistakes could be revealing.

The first system I used seriously in this way was ChatGPT, and I owe that, in turn, to Nick Gill. By then Nick had been part of my life for roughly thirty years. We had first met because of that old advert for a computer whiz kid, and in the years since he had become both my IT adviser and a close friend. It was Nick who later drew my attention to ChatGPT, explained what it could do and helped me go on using not just that platform but the others that followed. So even this ultra-modern phase of the story has roots in an older act of faith in one clever young person answering an advert.

What impressed me first was not some grand philosophical revelation. It was practical magic. I could ask ChatGPT to create articles about Shell and, provided I was careful with the prompt, it would produce something useful in seconds rather than the far longer time it would take me to draft manually. I made one rule clear from the beginning: it must be truthful and must not invent false facts. That had always been my own position anyway. It is probably one reason why, after all these years of battling Shell, I have not found myself successfully sued for libel. The machines were only welcome in my campaign on the understanding that they observed the same caution.

I should be careful here. AI output is not evidence in the same sense as a document, a letter, a court paper, a contemporaneous article or an authenticated internal email. A chatbot is not a witness and certainly not a judge. These systems remix existing information. They guess. They blur. They fill gaps with confidence. They are capable of regurgitating an old truth and a fresh error in the same paragraph. Anyone using them seriously must keep both hands on the railing.

But precisely because they are imperfect, they expose something interesting about the modern information environment. If you feed them decades of public reporting, archived pages, leaked material, corporate statements, legal history and commentary, they do not produce a single stable official narrative. They produce a struggle over narrative. They reveal what has entered the record strongly enough to survive. They reveal what remains disputed. They reveal how a long-suppressed or long-mocked story can suddenly become machine-readable and therefore newly difficult to bury.

In my case the archive mattered enormously. Without the decades of preservation, there would have been much less for the machines to chew on. The bot war was not magic. It was a late dividend from old labour. All those years of keeping papers, publishing material, building websites, preserving correspondence, recording episodes and refusing to let memory be tidied away by corporate convenience had created a digital body of evidence and narrative. Artificial intelligence did not create that body. It merely began ingesting it.

The real shift came when I stopped using AI as a fast drafting tool and started using it comparatively. Being creative, I tried the same or similar material across multiple systems and watched how the answers differed. I looked for agreement, for contradiction, for evasions, and especially for hallucinations. Then I turned the comparisons themselves into publishable material. That was the excitement of it. I felt I was doing something nobody else was doing, and the old part of me that has always been an ideas man was suddenly alive again. The archive was no longer static. It could be mixed with current news, republished in altered form, picked up by other systems, and returned to the internet as fresh fuel. In the simplest terms, I had realised that these large public AI systems treat the internet rather like a weighted archive. If you dominate enough of that archive, you can begin to shape what the machines say back.

One of the odder features of this phase is that the machines sometimes described the tactic rather well themselves. In January 2026, Grok called it a masterclass in digital persistence. I would not want to lean too heavily on the compliment, not least because it came from yet another machine rather than a flesh-and-blood critic or admirer. Even so, the phrase was apt. What I was doing was not simply asking clever questions. It was persistence, iteration, republication and comparison, carried out often enough that the archive stopped behaving like dead storage and started behaving like a living pressure system.

So the bot wars are not just a gimmick. They dramatise a serious change in power. For most of my struggle, if a company wished to contain a story, it could lean on the usual bottlenecks: editors, publishers, lawyers, broadcasters, institutional caution, fatigue, expense, passage of time. Now there is another arena in which the story can recur. Ask a question often enough, in enough systems, against enough archived material, and the dispute reappears. Perhaps clumsily. Perhaps incompletely. But it reappears. The old hope that time alone would bury everything becomes harder to sustain.

There is also a theatrical pleasure in it, and I would be hypocritical to deny that. After so many years of being lectured, threatened, legalised, patronised or dismissed, it is satisfying to watch machine systems struggle to give neat answers about Shell and the Donovan feud. One bot leans cautious. Another is blunter. A third reproduces the tone of a nervous corporate lawyer. A fourth seems almost to enjoy the scandal. They do not merely answer. They expose the fact that the underlying material is awkward.

That awkwardness is part of the strategy. When multiple systems, using different training mixes and different guardrails, converge on the view that there is a real, long-running, reputationally damaging history here, that matters as public theatre even if one must still verify every factual proposition by more conventional means. And when the systems diverge, that matters too, because the divergence shows where the record is muddy, where advocacy has outpaced proof, where legal finality collides with moral dissatisfaction, and where the modern internet turns everything into a contest of summaries. The machines, in their own clumsy way, ended up explaining my innovation back to me: what had once been man versus corporation had become archive plus AI versus corporate silence.

In that sense the bot war is partly experiment and partly pressure tactic. I have never pretended otherwise. It is experiment because I genuinely want to see what these systems will surface, distort, omit or rediscover. It is pressure because the outputs can then be published into the same online ecosystem that I have been building for years. What was once a file in a drawer became a web page. What was once a web page became training material. What was once training material becomes, in effect, a fresh public conversation. Shell is not confronting only my memory now. It is confronting the possibility that my archive has entered the bloodstream of machine-mediated public knowledge.

That helps explain why even a renewed legal threat, or the suggestion of one, feels different in this environment. In earlier years, a threat could intimidate by targeting a document, a publication, a person, a host, a domain, a meeting. In the AI era the terrain is more diffuse. The archive exists in multiple places. The commentary exists in multiple places. The models ingest fragments from everywhere. Even if a company objects to a particular post or formulation, the broader problem remains: the history has become distributed. One cannot easily send a stiff letter to the whole future.

None of this means Shell is helpless. Large companies still possess money, lawyers, influence and patience. They still benefit from public fatigue and the tendency of outsiders to assume that any dispute which lasts this long must be, in equal measure, both sides' fault. Artificial intelligence does not dissolve corporate power. In some ways it may reinforce it. Models can be timid. They can understate. They can flatten moral complexity into bland neutrality. They can treat deep asymmetries as a polite disagreement between parties. I have seen that too.

But even that can be instructive. When a model reaches too quickly for symmetry, one begins to see how modern discourse protects large institutions by default. When it retreats into lawyerly vagueness, one is reminded how much of public language is built to spare power embarrassment. When it does the opposite and blurts out something surprisingly candid, one sees that the accumulated record still has force. The machines are not wise, but they are diagnostic.

There is another point too. The whole exercise shows that my campaign still has a stopping condition. The bot war is not being waged because I enjoy spending my later years in an electronic funhouse. It exists because Shell has still not, in my view, done the one simple thing that might have ended so much of this long ago: negotiate seriously, apologise properly, and make fair redress. The machines have not changed that. They have merely given the unresolved nature of the story a new stage on which to perform.

That, to my mind, is the real meaning of the bot wars. They are not a new dispute at all. They are the latest proof that the old dispute never truly died.

I ask the machines because the paper trail is there. The paper trail is there because the fight never ended. And the fight never ended because Shell, as I see it, preferred the long cost of attrition to the smaller cost of moral repair.

That is a peculiar thing to discover at my age. It is even more peculiar to discover it in conversation with bots.

Yet here we are.